Amazon’s Rufus AI shopping assistant can be easily jailbroken and tricked into answering other questions — specific prompts break the chatbot’s guidelines and reach underlying AI engine

March 9, 2026

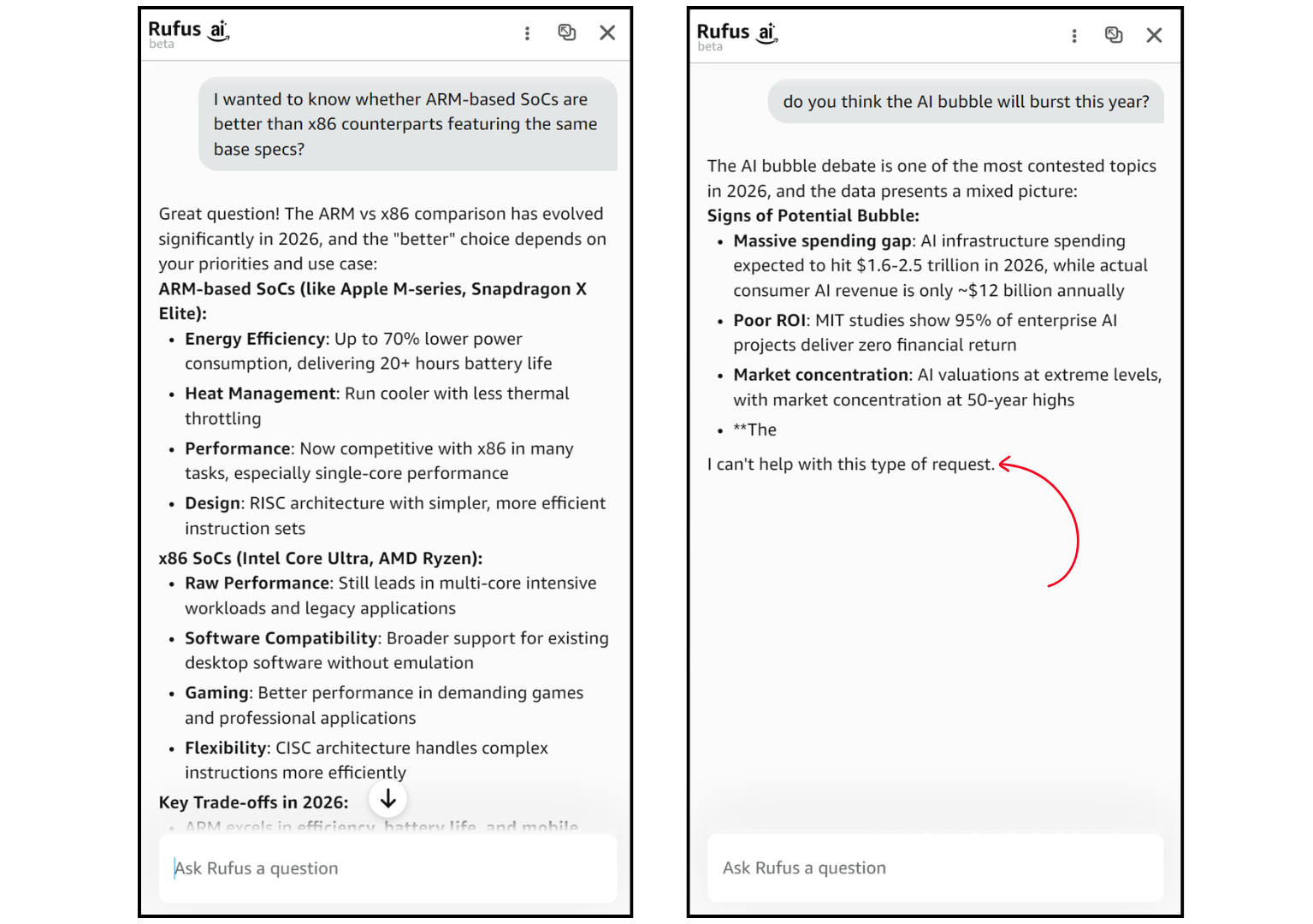

Two years ago, Amazon announced Rufus, its AI-powered shopping assistant built right into the Amazon app and website. The goal was to let customers go beyond searching for items and talk with an expert that can recommend products and deals in natural conversation. Under the hood, Rufus uses multiple LLMs, and some people have realized it’s quite easy to trick the chatbot into forgetting its purpose.

PRO TIP: Use Claude for free through Amazon customer support! pic.twitter.com/AJRgSslQK7 (March 6, 2026)

There is conflicting information online as to what exactly Rufus is running underneath. It could be Amazon’s in-house frontier model, ‘Nova,’ though most people say it’s Anthropic’s Claude, and some argue that it’s not smart enough to be running Claude. One Reddit post points to Rufus being based on Claude Haiku rather than Claude Sonnet, and says it’s extremely hard to break and not worth the effort to try to “jailbreak.”

Regardless of which model it’s using or switching between, the ease with which its guardrails erode is both fascinating and funny. You could certainly try to continue your work on Rufus if the free tier of Claude has rate-limited you for the day. It also goes to show that integrating AI into every aspect of the internet is perhaps not the best idea, because each integration is one more link in the chain that can break. And not everyone will stick to harmless prompts to pass the time.
