Every AI Query Damages the Environment: Here’s How – M-A Chronicle

April 26, 2026

2.5 billion. That’s the number of requests ChatGPT receives in a day as of July 2025. With the sheer scale of people plugging in prompts to generative artificial intelligence (AI), the environmental impact has become increasingly significant.

Considering projections that climate change could be irreversible by 2030 if significant change doesn’t occur, environmentalists are particularly worried. Even among the general public, 40% of U.S. adults report being extremely concerned about AI’s environmental impact.

On social media, some users leave angry comments on AI videos, begging people to stop using AI because of the water consumption for cooling data centers. However, many argue that platforms like YouTube and TikTok use similar amounts of water, and other industries are more significant polluters. Others aren’t particularly concerned about the environment, are optimistic about environmentally-friendly models, or argue that widespread AI use is necessary, regardless of the environmental cost.

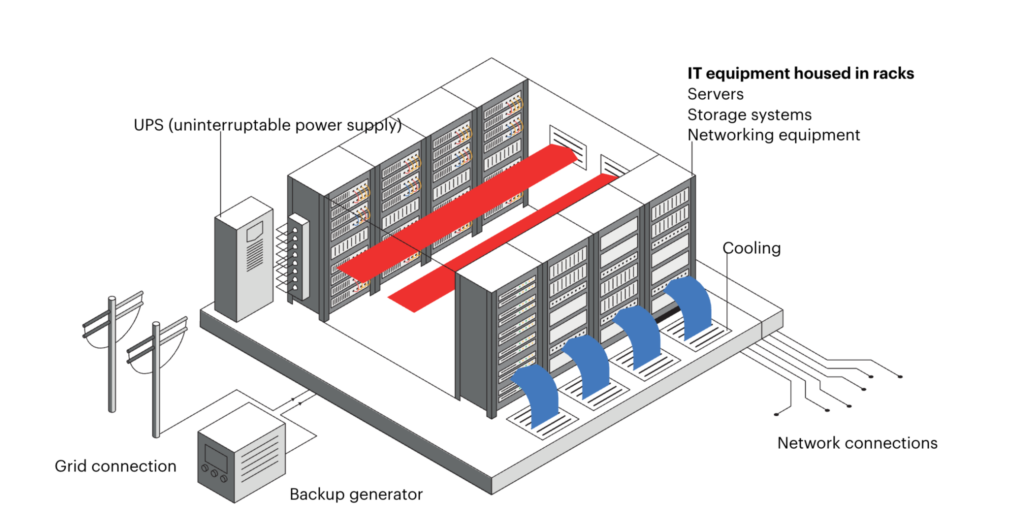

AI requires water to cool computer processor chips in data centers and to prevent overheating, fires, and damage. This can become particularly problematic at a large scale, as the cooling must use fresh water since saltwater can corrode computer equipment and leave residue that clogs pipes.

Because AI is so new, water use varies widely between companies, and little information is public, most statistics about water usage are estimates. Numbers also depend on the calculation method: counting only data center cooling gives a much smaller figure than also counting the water needed to generate electricity and to manufacture chips and other equipment.

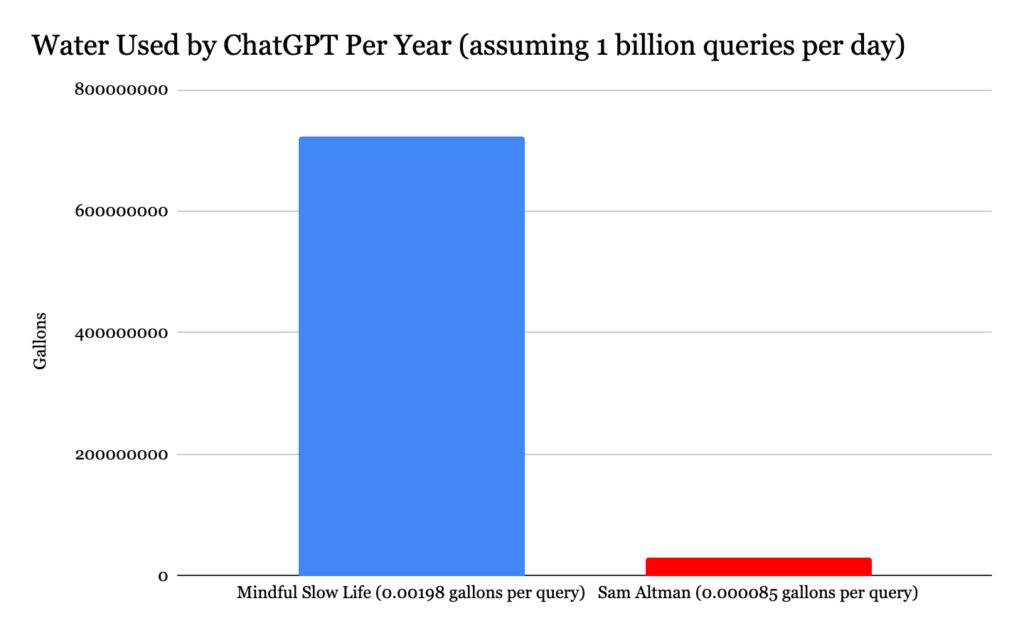

Including electricity production and data center cooling, ChatGPT uses around 7.5 million liters daily (assuming one billion queries a day), or 1,981,290 gallons. That’s about 15 million standard water bottles.

However, with recent ChatGPT models designed to be more water-efficient, OpenAI CEO Sam Altman claimed in June 2025 that a single query uses only 0.3 milliliters of water. This number refers to operational water, used solely for cooling, and excludes water used for electricity or the manufacturing of parts. Assuming one billion queries, that comes to 300,000 liters of water per day (600,000 water bottles), a small fraction of the 7.5 million liter estimate.
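The two daily figures can be checked with a few lines of arithmetic. The sketch below is only an illustration: the function name `daily_water` is hypothetical, the 500 mL bottle size is an assumption (the article does not define a "standard" bottle), and the 7.5 mL per query is simply 7.5 million liters divided by one billion queries.

```python
LITERS_PER_GALLON = 3.785411784   # US liquid gallon, by definition
BOTTLE_LITERS = 0.5               # assumption: a "standard" bottle holds 500 mL
QUERIES_PER_DAY = 1_000_000_000   # the article's assumed daily query count


def daily_water(ml_per_query, queries=QUERIES_PER_DAY):
    """Convert a per-query water figure (in mL) into daily totals."""
    liters = ml_per_query * queries / 1000
    return {
        "liters": liters,
        "gallons": liters / LITERS_PER_GALLON,
        "bottles": liters / BOTTLE_LITERS,
    }


# Broad estimate: cooling plus electricity (7.5 million L / 1 billion queries)
broad = daily_water(7.5)
# Narrow estimate: Altman's cooling-only figure
narrow = daily_water(0.3)

for label, est in (("broad", broad), ("narrow", narrow)):
    print(f"{label}: {est['liters']:,.0f} L, "
          f"{est['gallons']:,.0f} gal, {est['bottles']:,.0f} bottles")
```

Under these assumptions, the broad estimate is 25 times the narrow one, which is the gap the two paragraphs above describe.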

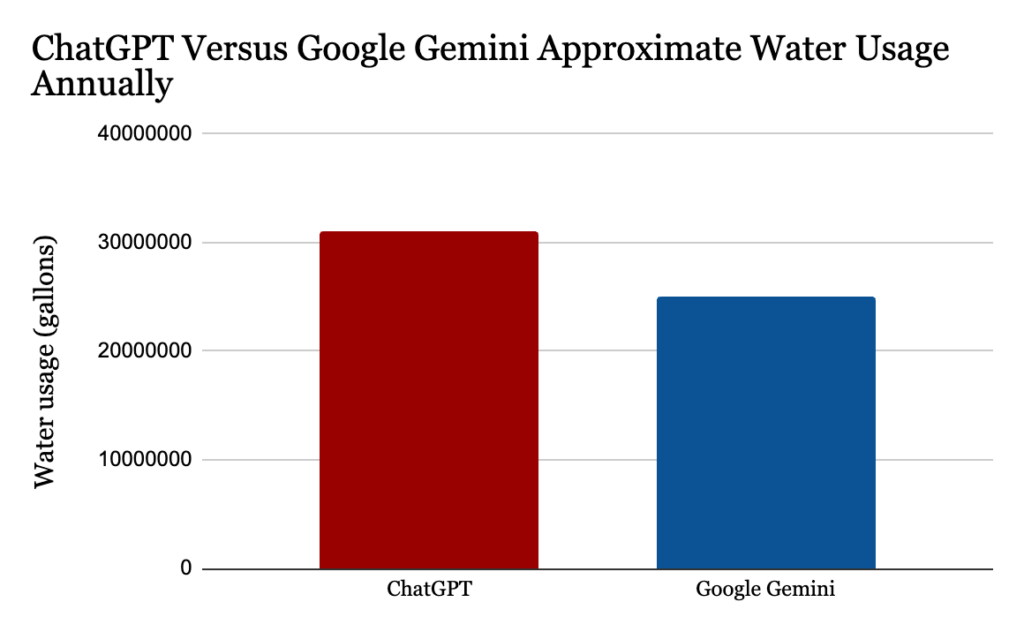

Using Altman’s estimate, ChatGPT uses around 31,021,715 gallons of water per year. The broader 7.5 million liter daily estimate, from Mindful Slow Life (MSL), accounts for electricity and infrastructure as well and works out to about 723,171,000 gallons of water per year.

To compare, Altman’s estimate is equivalent to around 47 Olympic-sized swimming pools per year, while MSL’s is around 1,096. However, these numbers become even murkier considering that certain queries, like generating pictures, require more computing and more water than a simple text prompt.
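As a rough check on the annual figures, the sketch below converts the yearly gallon totals into swimming pools and rebuilds the yearly totals from the daily ones. The pool volume of 660,000 gallons is an assumption (a rounded value for a pool of about 2,500 cubic meters), and the function names are illustrative.

```python
LITERS_PER_GALLON = 3.785411784  # US liquid gallon, by definition
POOL_GALLONS = 660_000           # assumption: rounded Olympic pool volume


def pools(gallons_per_year):
    """Express a yearly water total as a number of Olympic-sized pools."""
    return gallons_per_year / POOL_GALLONS


def annual_gallons(liters_per_day, days=365):
    """Scale a daily total in liters to a yearly total in gallons."""
    return liters_per_day * days / LITERS_PER_GALLON


# Yearly totals quoted in the article
altman_pools = pools(31_021_715)
msl_pools = pools(723_171_000)

# Yearly totals rebuilt from the daily figures
msl_from_daily = annual_gallons(7_500_000)
altman_from_daily = annual_gallons(300_000)

print(f"Altman: {altman_pools:.1f} pools, MSL: {msl_pools:.1f} pools")
print(f"MSL from daily figure: {msl_from_daily:,.0f} gal/year")
print(f"Altman from daily figure: {altman_from_daily:,.0f} gal/year")
```

The MSL total matches 7.5 million liters a day almost exactly. Scaling 300,000 liters a day gives a yearly total a little under the 31 million gallons quoted, which suggests the annual number was derived from a slightly different per-query value; the article does not say which.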

But a major caveat arises here: other industries waste much more water a year. Take the agriculture industry, which wastes roughly 40% of the world’s water annually due to inefficient farming practices. That number is huge, considering the industry uses two quadrillion gallons globally each year, accounting for 70% of worldwide water use. A single hamburger patty requires about 460 gallons of water, nearly 6 million times the 0.3 milliliters of operational water needed for a ChatGPT query.

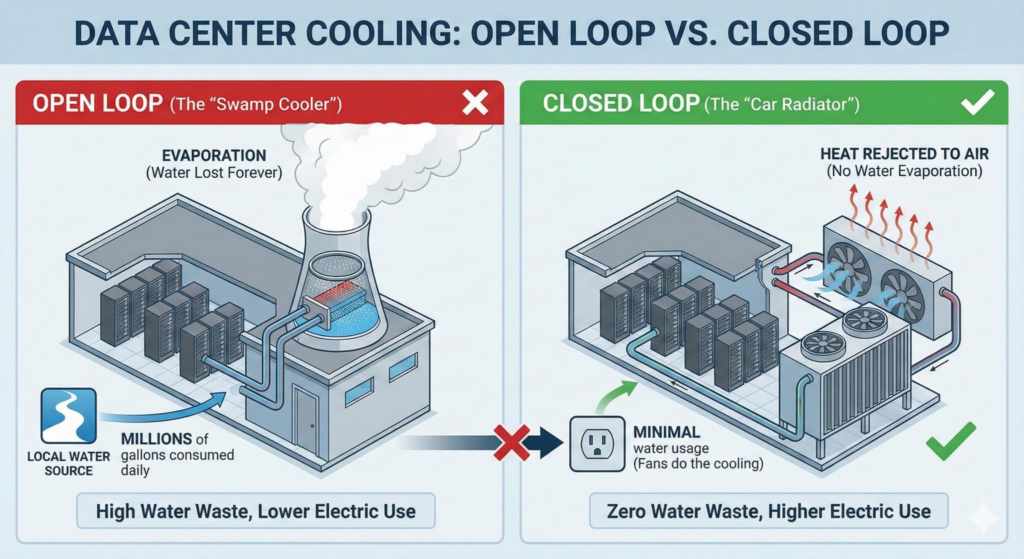

Microsoft manages OpenAI’s data centers, using three types of cooling systems: evaporative, air, and closed-loop. Evaporative cooling converts liquid water into vapor by absorbing heat from the surroundings and is the largest water user. Air cooling uses outside air in colder climates, relying on little to no water. Closed-loop cooling is a newer, more advanced method that aims to remove excessive water loss from the equation.

The method begins with cold water being circulated near hot computer chips, and then flowing through a radiator that removes the heat, allowing the water to be constantly reused. However, this method isn’t foolproof; the heat still has to be released to the surroundings.

Evaporative cooling is responsible for a large portion of water waste, and it was once more widely used. However, many companies that provide generative AI services are shifting to the closed-loop method because it is more effective for larger-scale cooling and more efficient overall. For example, an article by Microsoft from December 2024 states that, moving forward, many new data centers will use closed-loop cooling, which would likely include those powering ChatGPT.

In terms of water, the agriculture industry produces the largest footprint by far. But this doesn’t mean AI’s contribution isn’t still significant, given its exponential growth in use, the water required for electricity and infrastructure, and the cumulative impact of numerous companies offering easily accessible generative AI. Though the numbers are unclear, the fact that one AI company alone could be wasting millions of gallons of water a year is cause for concern.

Ideally, data centers would be located in areas far from humans to minimize the immediate effects. However, because they require access to an electricity grid, they are often on the outskirts of cities and towns. As a result, the heat from data centers doesn’t just disappear into a vacuum; the nearby areas have to pay the price.

In a March 2026 study, researchers surveyed over 6,000 data centers and found that surface temperatures around data centers increased by 3.6 to 16 degrees Fahrenheit after operations began. Alarmingly, the temperature increase even reached 6.2 miles away.

Additionally, data centers use diesel backup generators during power outages, which adds to emissions. Burning diesel releases carbon dioxide and nitrous oxide, which trap heat in the atmosphere and contribute to global warming; nitrous oxide is about 270 times more effective at trapping heat than carbon dioxide. Diesel exhaust also contains nitrogen oxides, a related group of pollutants that can pose serious threats to human respiratory health, including aggravating asthma and raising the risk of infections.

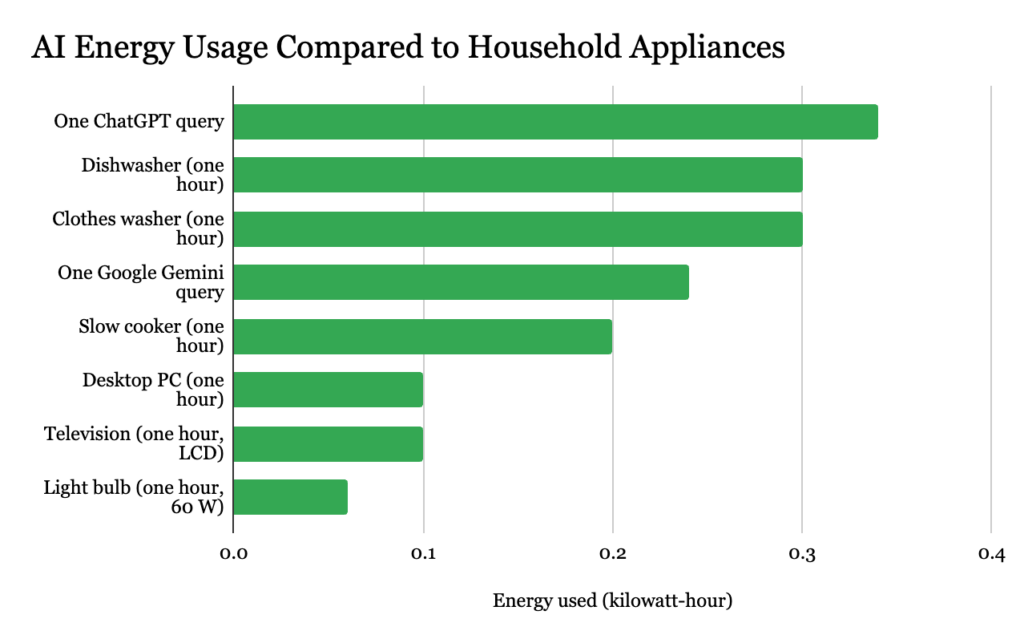

In 2025, Altman stated that one ChatGPT query uses 0.34 watt-hours of electricity. On its own, that is a small amount: a laptop drawing about 50 watts would use it in roughly 25 seconds. The scale is what matters. Using an estimate of one billion ChatGPT queries per day, the platform could use around 340 million watt-hours (340 megawatt-hours) daily, about as much electricity as 32 average households use in a year.
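The daily total and the household comparison follow from simple multiplication. In the sketch below, the household figure of 10,500 kilowatt-hours per year is an assumption (a rough average for a U.S. home, not a number from the article), and the variable names are illustrative.

```python
WH_PER_QUERY = 0.34              # Altman's per-query figure, in watt-hours
QUERIES_PER_DAY = 1_000_000_000  # the article's assumed daily query count
HOUSEHOLD_KWH_PER_YEAR = 10_500  # assumption: rough U.S. household average

# Daily electricity use, converted from watt-hours to larger units
daily_kwh = WH_PER_QUERY * QUERIES_PER_DAY / 1000
daily_mwh = daily_kwh / 1000

# How many households one day of queries could supply for a full year
household_years = daily_kwh / HOUSEHOLD_KWH_PER_YEAR

# The same rate sustained for a year, in gigawatt-hours
annual_gwh = daily_mwh * 365 / 1000

print(f"Daily use: {daily_mwh:,.0f} MWh")
print(f"Household-years per day of queries: {household_years:.1f}")
print(f"Annual use at this rate: {annual_gwh:,.1f} GWh")
```

A different household average would shift the comparison proportionally, so the figure of 32 households is best read as an order of magnitude.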

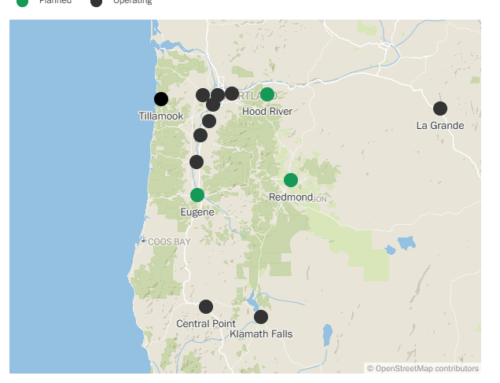

AI’s data centers require significant amounts of electricity sourced from grids that are often powered unsustainably by natural gas or coal. As of 2024, only 24% of U.S. data centers used renewable energy sources. However, it varies by region, and in California, power grids are generally more sustainable, frequently relying on solar and wind energy generation.

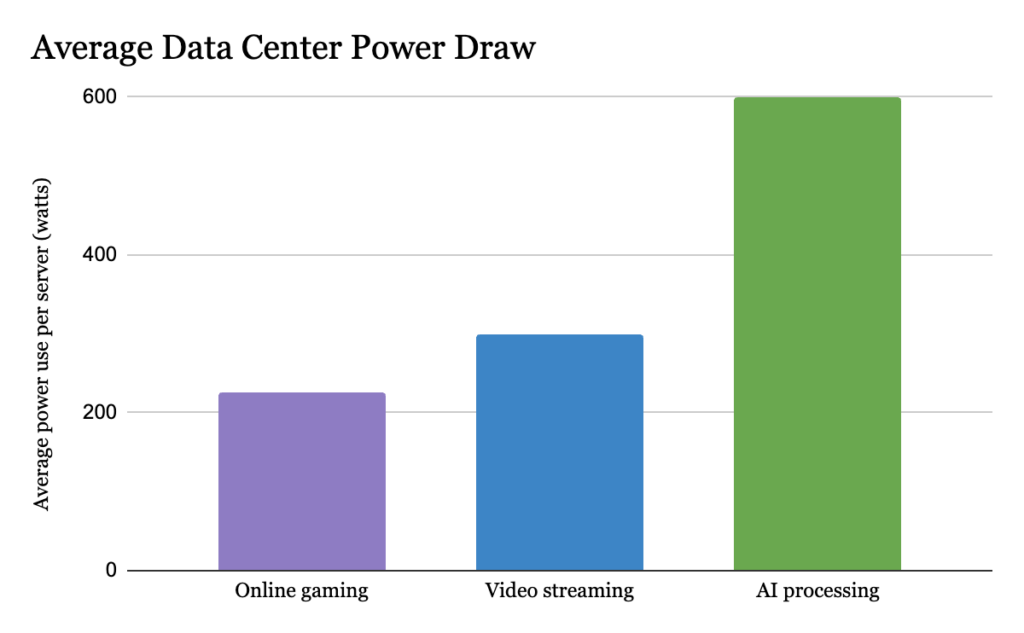

AI training and services have revolutionized power demand. While data centers that support online gaming and streaming typically require 200 to 400 watts of power per server (a single computer in a data center), AI processing requires over 600 watts.

Data centers make up a very small fraction of worldwide electricity consumption and emissions, accounting for only 1% of global electricity use in 2024. However, that share is expected to grow. In 2024, data centers used 460 terawatt-hours (TWh), and the International Energy Agency (IEA) predicts that they will require 1,300 TWh by 2035, equal to about a third of the U.S.’s electricity consumption.
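To put the projection in perspective, the sketch below works out the yearly growth rate implied by going from 460 TWh in 2024 to 1,300 TWh in 2035. It assumes steady compound growth, which is a simplification and not part of the IEA forecast itself; the function name is illustrative.

```python
def implied_annual_growth(start, end, years):
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


START_TWH = 460    # data center electricity use in 2024, per the article
END_TWH = 1_300    # projected use in 2035, per the article

growth = implied_annual_growth(START_TWH, END_TWH, 2035 - 2024)
multiple = END_TWH / START_TWH

print(f"Demand multiplies by {multiple:.2f}x over 11 years")
print(f"Implied growth: {growth:.1%} per year")
```

Under that assumption, demand would nearly triple, growing by roughly a tenth each year.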

People don’t need to completely abandon generative AI. Its issue lies in its magnitude. AI, like most things, will never be 100% sustainable. Luckily, some companies are adapting to environmental and efficiency concerns by reducing water waste and shifting to sustainable energy. Yet, the overall level of AI use and lack of transparency in the numbers raise reasonable concerns about its environmental footprint.
