Linux lays down the law on AI-generated code, yes to Copilot, no to AI slop, and humans take the fall for mistakes — after months of fierce debate, Torvalds and maintainers come to an agreement

April 12, 2026

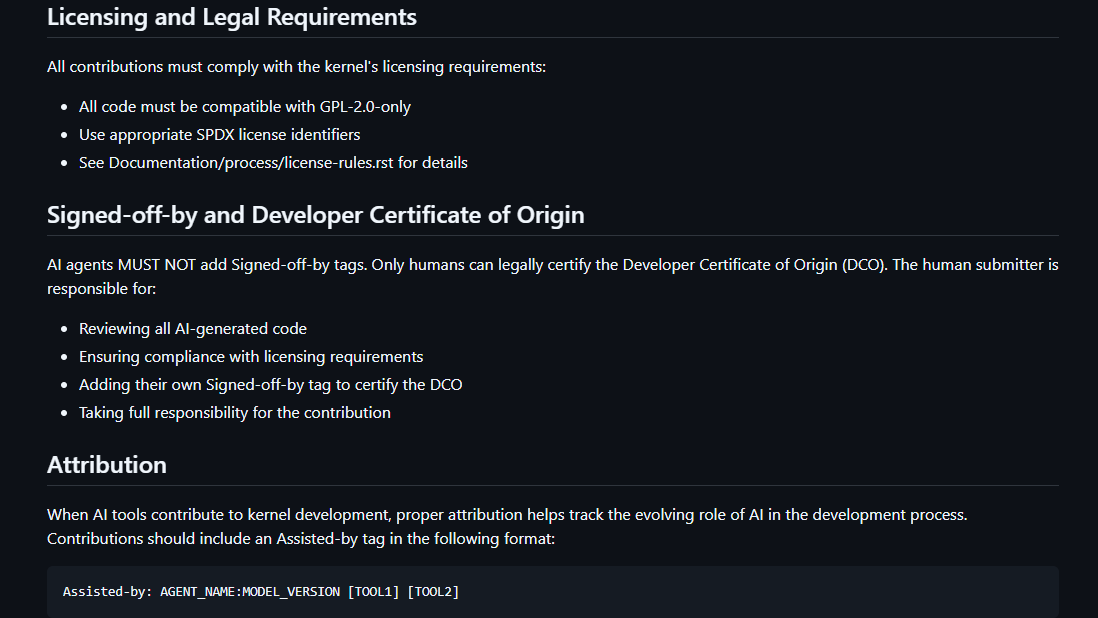

The open-source community’s long-simmering identity crisis over artificial intelligence just got a much-needed dose of pragmatism. This week, the Linux kernel project finally established a formal, project-wide policy that explicitly allows AI-assisted code contributions, provided that developers follow strict new disclosure rules. The new guidelines bar AI agents from using the legally binding “Signed-off-by” tag and instead require a new “Assisted-by” tag for transparency. Ultimately, the policy places legal responsibility for every line of AI-generated code, and for any resulting bugs or security flaws, on the human who submits it.
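To make the tagging rule concrete, here is a minimal sketch of how a pre-submission check could look. It is not the kernel's own tooling: the function names, the `ai_assisted` flag, and the example tag values are hypothetical, and the exact format the policy expects after “Assisted-by:” is not described here, so the sketch only checks that the tags are present.

```python
import re

# Matches trailer-style lines such as "Signed-off-by: Name <email>".
TRAILER_RE = re.compile(r"^(?P<key>[A-Za-z][A-Za-z-]*):\s*(?P<value>.+)$")


def parse_trailers(message: str) -> dict[str, list[str]]:
    """Collect 'Key: value' lines from the last paragraph of a commit message."""
    paragraphs = [p for p in re.split(r"\n\s*\n", message.strip()) if p.strip()]
    trailers: dict[str, list[str]] = {}
    # A message with only a subject line has no trailer block.
    if len(paragraphs) < 2:
        return trailers
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match:
            key = match["key"].lower()
            trailers.setdefault(key, []).append(match["value"].strip())
    return trailers


def check_disclosure(message: str, ai_assisted: bool) -> list[str]:
    """Return a list of problems; an empty list means the tags look complete.

    `ai_assisted` is a hypothetical flag supplied by the submitter. The
    sketch cannot tell whether a Signed-off-by line belongs to a human.
    """
    problems = []
    trailers = parse_trailers(message)
    if "signed-off-by" not in trailers:
        problems.append("missing Signed-off-by from a human submitter")
    if ai_assisted and "assisted-by" not in trailers:
        problems.append("AI-assisted change lacks an Assisted-by tag")
    return problems


# Hypothetical commit message; the tool name and address are placeholders.
EXAMPLE = (
    "sched: fix wakeup latency\n\n"
    "Explain the change here.\n\n"
    "Assisted-by: ExampleTool\n"
    "Signed-off-by: Jane Dev <jane@example.org>\n"
)
```

Calling `check_disclosure(EXAMPLE, ai_assisted=True)` returns an empty list, while the same message without the “Assisted-by” line would be flagged. The human sign-off is required in both cases, which mirrors where the policy puts responsibility.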


Until now, major projects have taken wildly different approaches to the AI question. Over the last two years, prominent Linux distributions like Gentoo, as well as the venerable Unix-like operating system NetBSD, moved to ban AI-generated submissions outright. NetBSD maintainers famously described LLM outputs as legally “tainted” due to the murky copyright status of the models’ training data.

The core of this panic revolves around the Developer Certificate of Origin (DCO). As Red Hat pointed out in a thorough analysis late last year, the DCO requires humans to legally certify they have the right to submit their code. Because LLMs are trained on massive datasets of open-source code that often carries restrictive licenses like the GNU General Public License, developers using Copilot or ChatGPT can’t genuinely guarantee the provenance of what they are submitting. Red Hat warned this could inadvertently violate open-source licenses and shatter the DCO framework entirely.

Legal headaches aside, project maintainers have also been fighting a losing battle against sheer volume. The open-source world is currently drowning in what the community has dubbed “AI slop.” The creator of cURL had to close bug bounties after being flooded with hallucinated code, whiteboard tool tldraw began auto-closing external PRs in self-defense, and projects like Node.js and OCaml have seen massive, >10,000-line AI-generated patches spark existential debates among maintainers.

The cultural friction of undisclosed AI code has been even more volatile. Late last year, NVIDIA engineer and kernel maintainer Sasha Levin faced massive community backlash after it was revealed that he had submitted a patch to kernel 6.15 that was written entirely by an LLM, changelog included, without disclosing it. While the code was functional, it included a performance regression despite being reviewed and tested. The community pushed back hard against the idea of developers slapping their names on complex code they didn’t actually write, and even Torvalds admitted the patch was not properly reviewed, partly because it was not labeled as AI-generated.

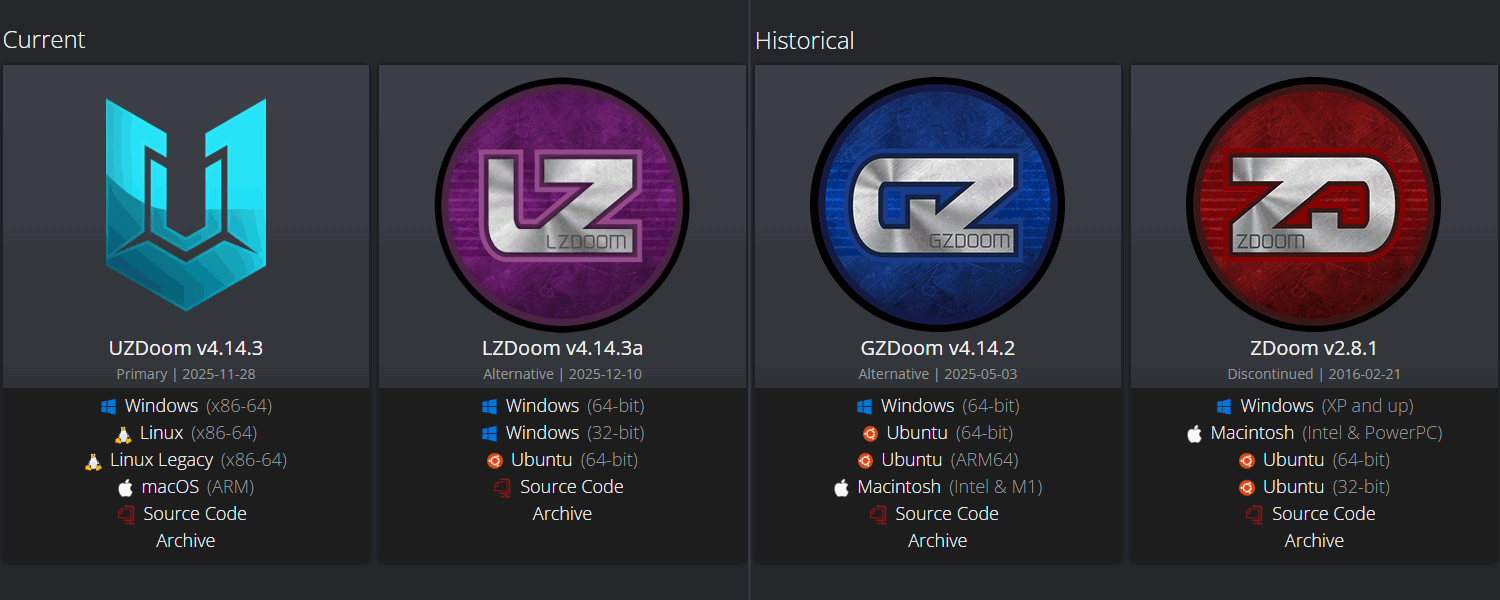

The Linux kernel isn’t the only community dealing with the fallout of undisclosed AI assistance. Over in the gaming sphere, the legendary (and still very much alive) Doom modding community was cleaved in two last year when Christoph “Graf Zahl” Oelckers, the longtime lead developer of the mega-popular GZDoom source port, was caught using undisclosed AI-generated patches. When community members called him out on the lack of transparency, Oelckers took a remarkably cavalier attitude, essentially telling his critics to “feel free to fork the project.” The community called his bluff: the overwhelming majority of GZDoom contributors left for a new fork, the UZDoom source port.

The GZDoom incident and the Sasha Levin backlash highlight exactly why the Linux kernel’s new policy is so vital. Most of the developer community is less angry about the use of AI and more frustrated about the dishonesty surrounding it. By demanding an Assisted-by tag and enforcing strict human liability, the Linux kernel is attempting to strip the emotion out of the debate. Torvalds and the maintainers are acknowledging reality: developers are going to use AI tools to code faster, and trying to ban them is like trying to ban a specific brand of keyboard.

The bottom line is, if the code is good, then it’s good. If it’s hallucinatory AI slop that breaks the kernel, the human who clicked “submit” is the one who will have to answer to Linus Torvalds. In the open-source world, that’s about as strong a deterrent as you can get.
