Sam Altman Wants to Know Whether You’re Human

April 24, 2026

This is an edition of The Atlantic Daily, a newsletter that guides you through the biggest stories of the day, helps you discover new ideas, and recommends the best in culture. Sign up for it here.

The opening moments of the 1982 film Blade Runner introduce viewers to a world of artificially intelligent beings that are “virtually identical” to humans. To tell man from machine, people rely on something called the Voight-Kampff test, which is a little like a polygraph; robot irises exhibit subtle tells when prompted. If you’re dealing with a robot, you’ll know by the eyes.

If Sam Altman has his way, this could be sort of how it works in real life. Last week, he announced an expansion of the verification service World ID, created by a start-up called Tools for Humanity. Altman co-founded the company in 2019, the same year he became CEO of OpenAI. Onstage last Friday, he described the product as a way to certify personhood in a digital landscape rife with bots, deepfakes, phishers, and other sorts of impostors. Think of it as an evolution of CAPTCHA, the security program used to identify bots and prevent attacks on websites. To verify your humanness and secure a World ID, you must stare into a white, frosted orb and allow the company to take pictures of your face and eyeballs.

Orbs, as they’re officially known, are essentially basketball-size cameras that Tools for Humanity has placed in stores, restaurants, and other spaces around the world. They capture biometric information from your irises, encrypt it to protect your privacy, and use it to create a sort of digital passport that you can bring to various sites and apps: something that may evoke not just Blade Runner but also Minority Report, in which Tom Cruise’s character undergoes a back-alley eyeball transplant to avoid facial-recognition software.

I encountered an Orb in the wild this morning at a New York coffee shop, where it was installed just above a waxy succulent and a couple of jars of raw honey. After downloading the World app and holding my phone up to the device, I stared deep into its aperture; I told the person behind me not to mind—he could sidle past me and order his coffee. A few minutes later, the app informed me that I’d been granted human status.

Intrusive as the whole thing is, Altman’s invention is targeting a real issue. A few years ago, images and videos rendered by AI couldn’t consistently replicate the work of physical cameras; today, models can convincingly generate even the slightest details. As the CEO of the company that helped spur the AI revolution, Altman bears some of the responsibility for this manipulable era of internet communication. Now he’s selling a solution.

For all the potential positive effects that artificial intelligence may have on society—Altman has suggested that AI might one day cure cancer and offer free education to “everybody on Earth”—the tech is also making it significantly easier for us to lie to one another. Scammers were deploying bots online well before ChatGPT arrived, but the trend has dramatically accelerated in the age of AI. With a few simple prompts, anyone can summon up a team of realistic alter egos. At the same time, people are creating faceless digital butlers known as agents, which are already starting to populate digital spaces and can often pass for humans. Whether generative AI is deployed in the service of impersonation, scams, and misinformation (costing companies billions each year) or for more benign reasons, it is fundamentally changing how we use the internet.

Altman has been working on this project for a while. World ID is an outgrowth of Worldcoin, a cryptocurrency venture that launched in 2023 and rewarded users with tokens for their Orb scans. Worldcoin still exists, and you can still collect some crypto when you get verified, but the company has downplayed that aspect as its ideas have evolved (the words crypto and blockchain were not invoked during last week’s presentation). The sci-fi factor has persisted, even as the device has come to look a little friendlier. My colleague Kaitlyn Tiffany described an earlier, chrome-encased iteration of the Orb as “evil-looking.” When I asked her about the new version yesterday, she told me that it looks “like a street lamp.”

Tools for Humanity announced last week that Zoom and Docusign would start supporting Orb-backed verification for some users and that Tinder, which has already tested it out in Japan, would start rolling it out across the globe. The apps pay fees as people go through the authentication process; users aren’t charged. But as Wired revealed on Wednesday, the company also misrepresented one of its deals. As part of its effort to target bots’ role in ticket scalping, Tools for Humanity created an adjacent product called Concert Kit, meant to help musicians reserve a portion of their tickets for verified human beings. Press materials claimed that Bruno Mars’s world tour, which started this month, would be using it. Both Live Nation and the singer’s management team denied it, and Tools for Humanity has since walked back the claim. In a statement, the company told me that references to Bruno Mars “stemmed from a miscommunication to the Tools for Humanity team.”

That’s more than a little ironic, given that the start-up’s entire proposition revolves around trust. Its Orbs are meant to divine the real from the fake. If Tools for Humanity can’t reliably communicate with the people who may be asked to use its products, how might the company function as an arbiter of truth? When I asked Tiago Sada, Tools for Humanity’s chief product officer, why people should trust the Orb, he told me that they don’t have to. Once an Orb has taken pictures of your face and eyes and confirmed your humanity, he said, it transfers the encrypted biometric data to your phone and deletes the data from the Orb. The company has also open-sourced much of the security design, so people can assess its trustworthiness for themselves.

AI’s capacity for deception is improving each day, and it’s reasonable to argue that we’ll need some sort of human-verification process to guard against it. One new AI model from Anthropic is so powerful, and such a threat to international cybersecurity, that governments and major banks around the world have been scrambling to bolster their defenses. As the CEO of OpenAI and the chairman of Tools for Humanity, Altman has a financial interest both in the products that create these dangers and in the ones that guard against them. He’s better equipped than most to understand that despite technology’s abundant power, humans are, for now, still the designers. To trust the machines, people need to be able to trust one another too.

Today’s News

- The Justice Department said that it will end its criminal investigation into Federal Reserve Chair Jerome Powell over cost overruns tied to renovations at two Fed buildings, after a judge found little evidence of wrongdoing.

- Defense Secretary Pete Hegseth said that U.S. forces will maintain a blockade of Iranian ships and ports for “as long as it takes.”

- A U.S. Special Forces soldier who participated in the operation that removed Venezuelan President Nicolás Maduro from power was charged with using classified information to place bets on the prediction platform Polymarket, prosecutors said yesterday. Authorities allege that the soldier made more than $400,000 wagering on the outcome of the operation using insider knowledge.

Dispatches

- The Books Briefing: Contrary to what we think of as intellectual property, most ideas are difficult to trace back to one human mind, Boris Kachka writes.

Explore all of our newsletters here.

Evening Read

Theft Is Now Progressive Chic

By Thomas Chatterton Williams

In 1785, Immanuel Kant introduced his famous “categorical imperative.” Put simply: Act the way you want others to behave. This dictate, a version of the Golden Rule, has been a bedrock of moral philosophy for centuries. But for the New Yorker staff writer Jia Tolentino, Kant’s “categorical-imperative-type thing” no longer applies. Moral rectitude, in some left-wing corners of the commentariat, is out; flagrant disregard of the social contract is in.

Yesterday, The New York Times posted a video of a conversation featuring Tolentino, the pro-communist streamer Hasan Piker, and the Times opinion editor Nadja Spiegelman, under the headline: “The Rich Don’t Play by the Rules. So Why Should I?” It began with Tolentino, a highly successful author, admitting to shoplifting lemons from Whole Foods. “I think that stealing from a big box store—I’ll just state my platform—it’s neither very significant as a moral wrong, nor is it significant in any way as protest or direct action.”

Culture Break

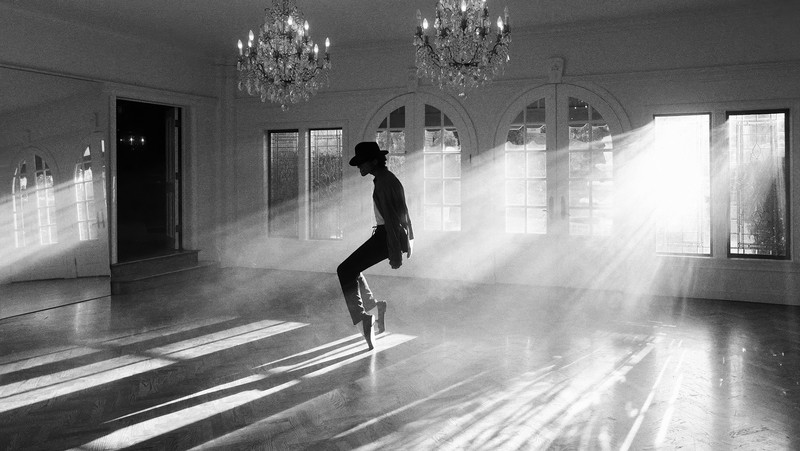

Watch. Michael (out now in theaters) is a warped and childish take on the life of Michael Jackson, Spencer Kornhaber argues.

Read. Stewart Brand’s Whole Earth Catalog was seen as a countercultural milestone, but his new book, Maintenance of Everything, reveals his alliances with the powerful, Alec Nevala-Lee writes.

Illustration Sources: Fado / Smith Collection / Getty; Cundra / Getty; Colors Hunter – Chasseur de Couleurs / Getty.

Rafaela Jinich contributed to this newsletter.

When you buy a book using a link in this newsletter, we receive a commission. Thank you for supporting The Atlantic.
