Amazon workers are gaming the AI leaderboard. HR built it.

May 13, 2026

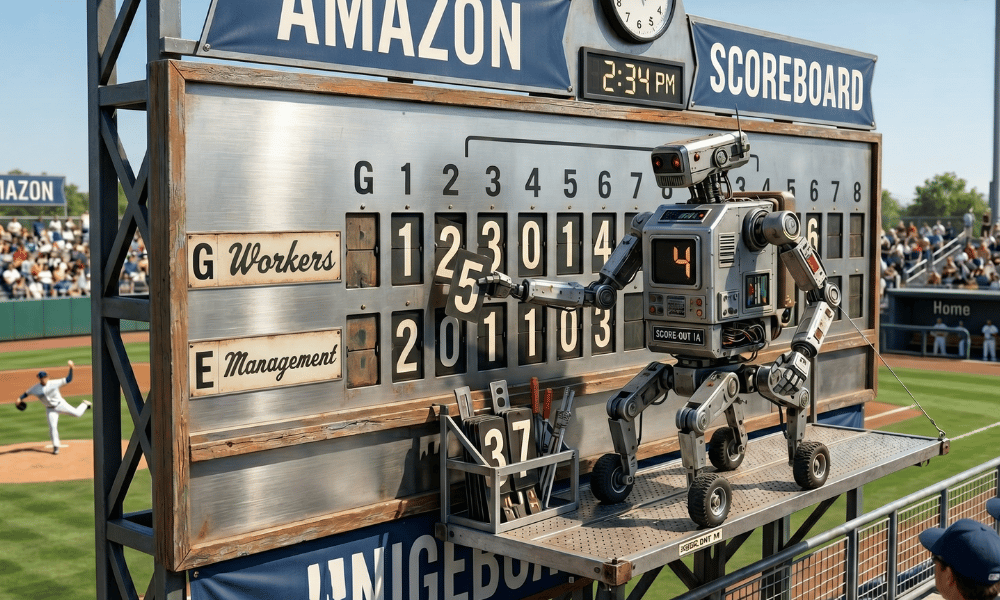

Inside Amazon’s “tokenmaxxing” scandal lies a textbook warning about what happens when companies measure AI adoption instead of AI value

Amazon employees are using an internal AI tool to run unnecessary, low-value tasks — not because the work needs doing, but because the activity inflates their scores on a company leaderboard tracking artificial intelligence usage.

The practice, which workers have taken to calling “tokenmaxxing,” was reported yesterday by the Financial Times and has since circulated as a case study across the technology industry. It exposes, in unusually vivid terms, what happens when a productivity metric becomes a target: the metric stops measuring productivity and starts measuring the human capacity for gaming.

The episode is not merely a Silicon Valley curiosity. For HR leaders who are currently designing, implementing, or being asked to justify AI adoption programs — which is to say, most of them — it is a live demonstration of a failure mode that is easy to build and hard to reverse.

What Amazon Built, and What Went Wrong

Amazon had been widely deploying MeshClaw, an in-house agentic AI product that allows employees to create software agents capable of connecting to workplace tools and completing tasks on a user’s behalf. The bot can initiate code deployments, triage emails, and interact with applications including Slack, according to the Financial Times. An internal memo described it in terms that will be familiar to anyone who has sat through an AI all-hands: it “dreams overnight to consolidate what it learned, monitors your deployments while you’re in meetings and triages your email before you wake up.”

More than three dozen Amazon engineers worked on the tool. The company positioned it as empowering “thousands of Amazonians to automate repetitive tasks each day.”

But Amazon had also introduced targets requiring more than 80 percent of its developer workforce to use AI tools each week, and had begun tracking token consumption — the units of data processed by AI models, essentially a meter of how much the tools are being run — on internal leaderboards. Team-wide statistics were initially visible to all staff before being restricted so only employees and their managers could view them.

The result was predictable to anyone who has studied organisational behaviour. “There is just so much pressure to use these tools,” one Amazon employee told the Financial Times. “Some people are just using MeshClaw to maximise their token usage.” Another said the data was being watched regardless of official policy: “Managers are looking at it. When they track usage it creates perverse incentives and some people are very competitive about it.”

Amazon told the Financial Times that token statistics would not be used in performance evaluations. Workers did not believe it.

A Pattern Across Silicon Valley

Amazon is not alone. Meta employees engaged in similar tokenmaxxing behaviour, competing on an internal leaderboard called “Claudeonomics” that ranked the company’s roughly 85,000 workers by token consumption. In a 30-day window, total usage on the dashboard exceeded 60 trillion tokens. The leaderboard was taken down after reporting by The Information, but Meta’s CTO Andrew Bosworth had publicly endorsed the underlying logic — pointing to his best engineer spending the equivalent of their salary in AI tokens as evidence of a productivity multiplier.

At Microsoft, president Julia Liuson sent an internal memo saying AI use was “no longer optional, it’s core to every role and every level.” A company spokesperson later clarified there was “no formal review of an employee’s AI usage” — the kind of clarification issued when the original message lands harder than intended.

A May 2026 CNBC report on workplace AI monitoring noted that “almost every Fortune 500 is tracking overall AI usage,” with tokens, prompt counts, licence activations, and seat-utilisation rates becoming standard surveillance inputs alongside older metrics like badge-swipe and keyboard activity.

The stakes behind the pressure are staggering. Combined 2026 capital expenditure from Amazon, Microsoft, Alphabet, and Meta is already tracking between $650 billion and $700 billion, with some Wall Street projections exceeding $1 trillion for 2027. Every executive leading a company that has made those commitments has an investor relations problem if adoption numbers look weak. Token counts are the answer — unless employees are manufacturing the counts themselves.

The HR Problem at the Center of This

The Amazon story is being described by analysts as a textbook case of Goodhart’s Law: the principle that when a measure becomes a target, it ceases to be a good measure. The moment token consumption was tied to leaderboards that managers could see, it stopped measuring AI productivity and started measuring competitive anxiety.

HR leaders designed this. Not maliciously — but the incentive structure that produced tokenmaxxing is a people management structure, not a technology one. Weekly usage targets, visible leaderboards, ambiguous signals about whether the numbers feed into performance reviews: these are HR design choices, and they have produced a predictable human response.

HRD has reported that 69 percent of executives believe refusing to adopt AI is a greater threat to someone’s job than AI itself, and that 59 percent say they would replace workers who resist. In that environment, an employee confronted with a leaderboard and an 80 percent usage target is not making a free choice about whether to adopt the technology. They are responding to a threat. The tokenmaxxing is the response.

HRD’s reporting on mandatory AI adoption found that only four percent of employers reported employee resistance as a barrier to AI adoption — yet nearly a quarter of workers said they would consider leaving a job if forced to use AI tools in ways they did not support. The gap between those two numbers describes the same dynamic: employees are complying visibly and resisting quietly. Tokenmaxxing is simply a more industrious version of that quiet resistance.

The Measurement Problem Is Also a Security Problem

Multiple Amazon employees told the Financial Times they were alarmed by the security profile of MeshClaw itself. The tool was granted permission to act on a user’s behalf — initiating code deployments, interacting with internal systems, sending communications. One employee said: “The default security posture terrifies me. I’m not about to let it go off and just do its own thing.”

This concern sits alongside the gaming problem rather than beneath it. An AI agent that employees are running on unnecessary tasks to inflate usage scores is an agent taking real actions in real systems — creating code deployments that did not need to happen, sending emails that did not need to be sent. The perverse incentive structure does not just produce fake productivity data; it produces real operational noise.

HRD has reported that 70 percent of managers observed at least one AI-related error from a direct report in the previous 12 months, a pattern the researchers described as an “AI slop” crisis in which employees treat AI outputs as finished work rather than as a starting point. Add leaderboard pressure that rewards running AI more, not running it better, and the error rate compounds.

What HR Should Take From This

The Amazon episode arrives at a moment when CEOs are under board pressure to deliver measurable AI-driven outcomes, and that pressure flows downstream to HR through KPIs, adoption targets, and the implicit understanding that usage statistics will be scrutinised. That pressure is not going away. But the way it is being transmitted into the workforce is producing the opposite of what it intends.

Several practical observations for people professionals:

Measuring usage is not measuring value. Token counts, weekly active users, and seat-utilisation rates tell you whether employees are running the tools. They tell you nothing about whether the tools are producing better work. HRD has reported that even among companies seeing productivity gains from AI, roughly 37 percent of time saved is being consumed by rework — for every 10 hours gained, nearly four are lost correcting AI outputs. If the KPI is token consumption, none of that rework is visible.

Leaderboards drive performance theater, not performance. The Wells Fargo fake accounts scandal was a leaderboard problem before it was a compliance problem. Aggressive sales targets tied to evaluation produced the appearance of cross-selling success regardless of whether customers wanted the products. The mechanism at Amazon is structurally identical, scaled to AI and played out in tokens rather than accounts.

The ambiguity about whether metrics feed into reviews is the problem, not the solution. Amazon told employees that token statistics would not inform performance evaluations. Employees did not believe it, and behaved accordingly. Shadow AI research consistently finds that employees act on what they believe management is watching, not on what policy documents say. If there is any possibility that a metric feeds into decisions about someone’s career, they will optimise for it.

Transparency is the variable that changes behaviour. Research cited by HRD found that 92 percent of desk workers in organisations with a clearly communicated AI strategy reported productivity gains. The companies performing best on AI adoption are not the ones with the most aggressive targets — they are the ones in which employees understand why the technology is being deployed and what it is expected to produce. “When leaders are transparent, employees lean in and performance follows,” according to EY’s Global Consulting AI lead.

And more managers than ever believe AI can replace their direct reports. The share who agreed that replacing employees with AI tools was a good thing rose from 23 percent in 2025 to 35 percent in 2026. In that environment, an employee who sees a leaderboard tracking their AI usage is not imagining the threat. The HR response to tokenmaxxing is not to take down the leaderboard and move on. It is to ask what the leaderboard communicated about what the organisation values — and whether that is actually what you want employees to believe.

Amazon spent $200 billion this year to make AI central to how its employees work. The tokenmaxxing problem did not cost a dollar to build. It came free, with the leaderboard.
